Abstract

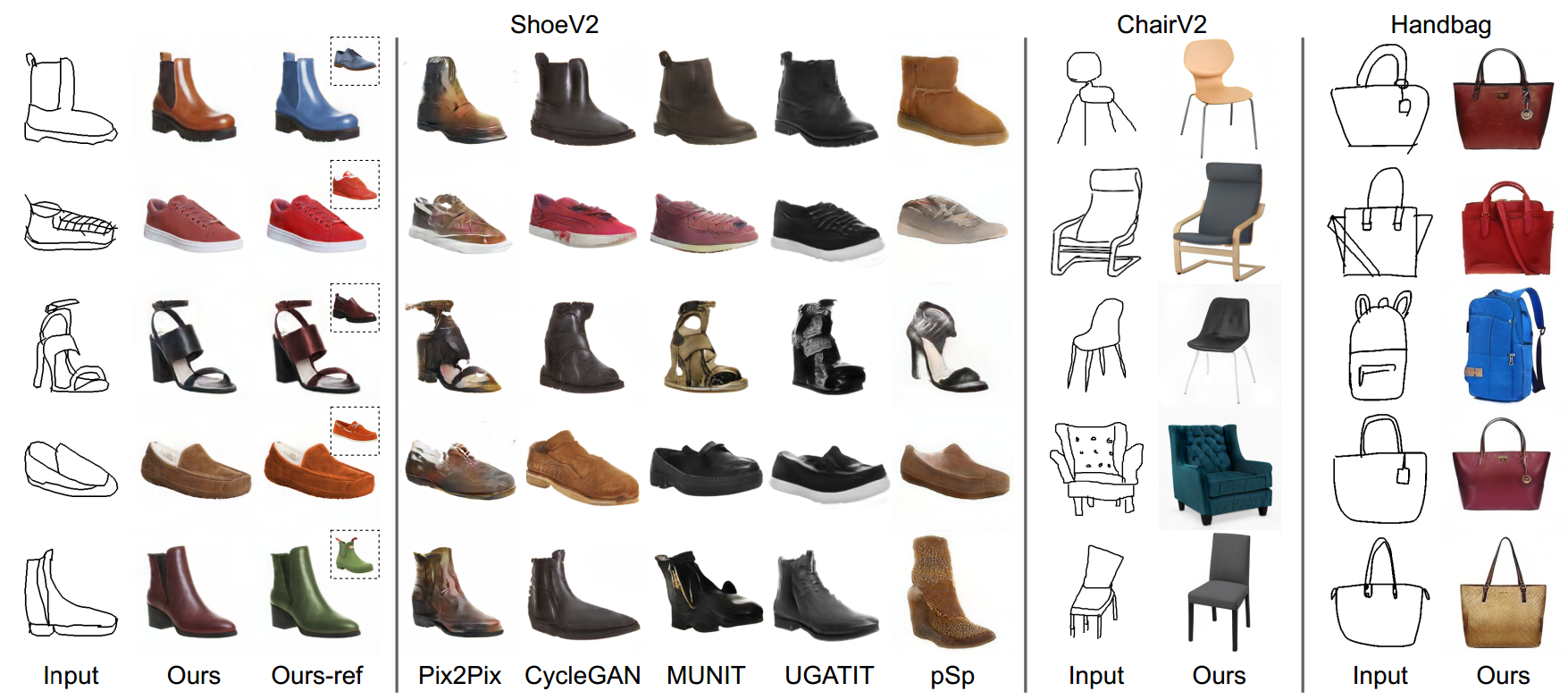

Given an abstract, deformed, ordinary sketch from untrained amateurs like you and me, this paper turns it

into a photorealistic image - just like those shown in Fig. 1(a), all non-cherry-picked. We differ

significantly from prior art in that we do not dictate an edgemap-like sketch to start with, but aim to

work with abstract free-hand human sketches. In doing so, we essentially democratise the sketch-to-photo

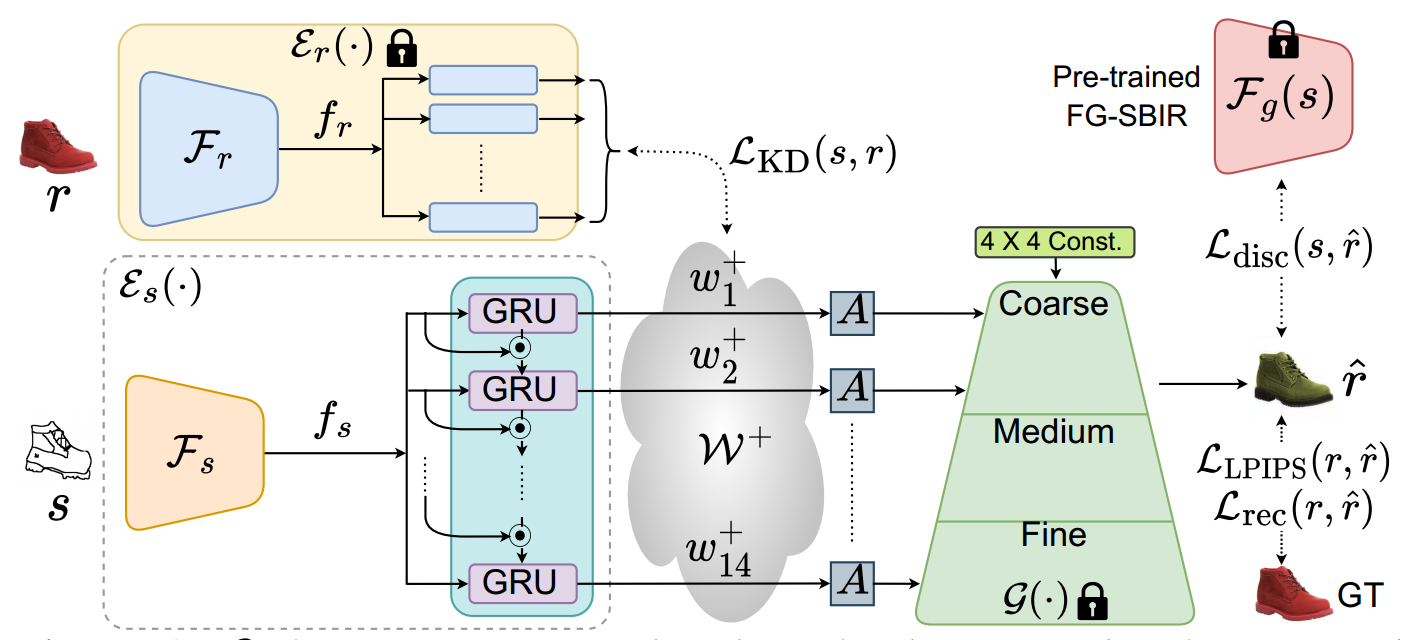

pipeline, "picturing" a sketch regardless of how good you sketch. Our contribution at the outset is a

decoupled encoder-decoder training paradigm, where the decoder is a StyleGAN trained on photos only. This

importantly ensures that generated results are always photorealistic. The rest is then all centred around

how best to deal with the abstraction gap between sketch and photo. For that, we propose an

autoregressive sketch mapper trained on sketch-photo pairs that maps a sketch to the StyleGAN latent

space. We further introduce specific designs to tackle the abstract nature of human sketches, including a

fine-grained discriminative loss on the back of a trained sketch-photo retrieval model, and a

partial-aware sketch augmentation strategy. Finally, we showcase a few downstream tasks our generation

model enables, amongst them is showing how fine-grained sketch-based image retrieval, a well-studied

problem in the sketch community, can be reduced to an image (generated) to image retrieval task,

surpassing state-of-the-arts. We put forward generated results in the supplementary for everyone to

scrutinise.